A new report is recommending that vulnerable computers be barred from accessing the internet — but the idea risks creating more problems than it solves.

Earlier this week, the Standing Committee on Communications tabled a report on its yearlong inquiry into cybercrime. The report, headed Hackers, Fraudsters and Botnets: Tackling the Problem of Cyber Crime, makes 34 recommendations aimed at improving computer security in Australia. One of them in particular, a proposed industry code requiring Australians to install and maintain antivirus and firewall software to access the internet, has sparked some debate.

To assess the merits of that recommendation, it is necessary to understand how ISPs are presently (mostly not) regulated in the area of cyber security, and what exactly the report proposes to change.

The Internet Industry Association (IIA), a group representing ISPs, is largely responsible for writing the codes that regulate them. In relation to cyber security, the IIA recently released a voluntary code of practice titled icode. Among other things, this code lists a number of steps that ISPs may take when they become aware of malware-infected machines on their networks (such as notifying the user or disconnecting the user from the internet), but it leaves it up to the relevant ISP to decide which course of action is appropriate in the circumstances.

The current code is thus doubly voluntary. First, the code itself is voluntary, so ISPs can choose not to comply with it at all. Second, ISPs that choose to comply with the code are not required to take any particular steps in relation to malware-infected machines on their networks. That is, the current code does not provide for any mandatory response to malware-infected machines. And in no way does it require users to install and maintain antivirus and firewall software.

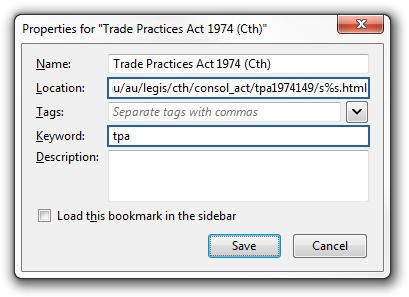

The first change the report proposes is that the industry code be registered. The Australian Communications and Media Authority (ACMA) presently has a power under the Telecommunications Act 1997 (Cth) to register industry codes that deal with certain things. Where such an industry code is registered, ACMA can direct an ISP to comply with the code. Failure to comply with such a direction exposes the ISP to a civil penalty of up to $250,000 per breach. A registered industry code is thus effectively mandatory.

Next, if the recommendations were adopted, ISPs would be required to take certain mandatory steps when malware-infected machines are found on their networks. Specifically, they would be required to notify the relevant users and implement graduated access restrictions (including disconnection) until the relevant machines are cleaned. Importantly, the report does not propose to require immediate disconnection of users whose machines are infected with malware, but rather a graduated response, where disconnection would presumably be the last step. The distinction matters because removal of malware often depends on the installation of up-to-date antivirus software, which is usually obtained online.

Most notably, though, the proposed code would require ISPs to include a contractual term in their acceptable use policies requiring users to install and maintain antivirus and firewall software before accessing the internet. It is this requirement that has raised the most eyebrows.

The most readily apparent problem with this recommendation is that enforcement would be impractical. The proposed code would require a new term in the contract between the ISP and the user, which could only be legally enforced by the ISP (and not, for example, by ACMA). It is not clear whether ISPs would be motivated to enforce these new contractual obligations. Most ISPs’ acceptable use policies currently prohibit the use of their services to infringe copyright, yet as the content industry will tell you, ISPs have not exactly been zealous in policing that part of their policies.

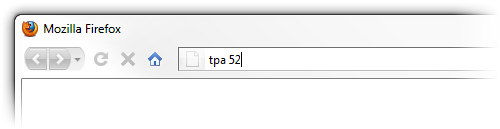

But even if the code required ISPs to actually enforce their contractual rights, for example by disconnecting users who did not comply, it would not be practical for ISPs to verify that their users have up-to-date antivirus and firewall software installed. Arguing that ISPs could manage this task, prominent cyber-security consultant Alastair MacGibbon has made the following point:

There is software available which could be on end-user machines that would allow my ISP, as I log in, to check that I have my firewall turned on, that I have an antivirus that they approve or recommend installed on my computer, and that my operating system and browser are patched — and if those things aren’t met then [my ISP would not] give me [access].

However, such software only works with certain antivirus and firewall products and only works on certain operating systems. And it would put ISPs in the position where they would have to approve particular antivirus and firewall software before users could use it, significantly limiting consumer choice. Approaching the issue of computer security this way appears to create more problems than it solves. Should ISPs be allowed — let alone forced — to dictate what antivirus and firewall products their users may use and what operating systems they may run? And should users be forced to install software from their ISPs that reports back what software they are running to their ISPs?
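To make the limitation concrete, here is a minimal sketch of the kind of login-time check described in the quote above. It is illustrative only: the report names no products or platforms, so the allow-lists, the `DeviceReport` fields, and the `admission_decision` function are all hypothetical, and real admission-control software would be considerably more involved.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical allow-lists: the report does not name any products or platforms.
SUPPORTED_OSES = {"windows"}
APPROVED_ANTIVIRUS = {"ExampleAV"}


@dataclass
class DeviceReport:
    """What an assumed ISP-supplied agent might report when a user logs in."""
    os_name: str
    antivirus: Optional[str]
    firewall_on: bool
    os_patched: bool
    browser_patched: bool


def admission_decision(report: DeviceReport) -> Tuple[bool, str]:
    """Return (allowed, reason) for one device under the assumed policy."""
    # The agent can only inspect platforms it was written for.
    if report.os_name.lower() not in SUPPORTED_OSES:
        return False, "agent does not support this operating system"
    if not report.firewall_on:
        return False, "firewall is off"
    # Only products the ISP has approved count as "installed".
    if report.antivirus not in APPROVED_ANTIVIRUS:
        return False, "antivirus is missing or not on the approved list"
    if not (report.os_patched and report.browser_patched):
        return False, "operating system or browser is not patched"
    return True, "all checks passed"
```

Under these assumptions, a fully patched Linux machine with its firewall on would still be refused, as would a Windows machine running a competent antivirus product that the ISP had not approved. The check ends up measuring conformity with the ISP's list, not whether the machine is actually secure.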

The other problem with the recommendation is that it is not clear what exactly users would be required to do to comply with these new contractual obligations. Would antivirus and firewall software need to be installed on all devices connected to the user’s network? Antivirus and firewall software for iPhones and iPads, for example, is not currently available, or even possible. And there are many other devices for which such software is not as readily available as it is for Windows, including computers running Mac OS X and Linux (arguably because those devices do not need it to the same extent).

The question that then arises is whether any of this is really necessary. Most broadband connections are already provided using a modem-router that doubles as a firewall, and Windows itself (like most other operating systems) already includes a firewall that is on by default. While comprehensive antivirus software is not included with Windows itself (or most other operating systems), free solutions, including Microsoft Security Essentials, are readily available. It is not clear how including a contractual term that most users will never read would be any more effective at encouraging use of appropriate security software than would educating users about the need for such software at the time they are provided with internet access (and perhaps via periodic reminders).

Notwithstanding the somewhat controversial recommendations discussed above, it is worth mentioning that the report does cover a lot of ground and makes many other good recommendations. They deal with three areas: aggregation and distribution of data about cybercrime, updating criminal and civil enforcement laws, and educating the public about computer security.

The report recommends setting up coordinated systems to gather and share information about cybercrime, with the aim of using that information to improve responses to online threats. Among other things, this would include developing a reporting system aimed at consumers and small and medium-sized businesses, consisting of a centralised portal for reporting cybercrime (including malware, spam, phishing, scams, identity theft, and fraud) and a 24/7 reporting line and helpline.

Criminal laws dealing with cybercrime would be reviewed and updated where necessary, and the Australian Consumer Law would be amended in two notable ways. First, consumers would gain a specific right to sue for unauthorised installation of software that monitors, collects, and discloses information about consumers’ activities (ie, spyware). Second, consumers would gain a right to sue a manufacturer for loss caused by a product that was released onto the Australian market with known security vulnerabilities.

Finally, and perhaps most importantly, the report sets out steps to improve community awareness of computer security issues. It does this in two ways. First, the report proposes a ‘public health style campaign’ to deliver messages about computer security issues as well as appropriate behaviours and technical precautions that users should take. Second, the report recommends specific changes to the law requiring, for example, the provision of security information about certain products (such as computers and routers) to users at the point of sale, and requiring also that certain products be designed to prompt and guide users to choose more secure settings (such as setting strong encryption on your wireless access point to secure your network).

While the report contains certain controversial recommendations, that’s normal for reports like this one. Meanwhile, the many reasonable recommendations the committee makes, in particular the points about educating users, are a valuable contribution and deserve consideration.